httperf web server performance test

Deploying a web server on your local intranet is quick and easy. The widely used Apache web server can be deployed in minutes. However, there is a large difference between deploying a web server for intranet use and deploying one for a high-traffic internet website.

Deploying Apache, or any other web server, for a high-traffic production website requires a number of decisions: whether to use the prefork or worker MPM, how much RAM each process may use, the keep-alive timeout, caching, load balancing, and so on.

Above all, to measure and confirm the performance of the web server, an administrator needs to run some tests. A variety of tools are available for this; they are called performance or benchmark testing tools. The topic of this post is one such tool, called "httperf".

An introduction to httperf

David Mosberger of Hewlett-Packard created httperf for benchmarking the performance of a web server.

This tool can be used to generate a specific workload on the server to test its performance. You can measure the response rate of the web server when it is operating at full load.

httperf is implemented in C to keep its own performance high. It is designed to rely as little as possible on the underlying operating system.

httperf supports both HTTP/1.0 and HTTP/1.1. We will test this tool on a virtual machine with the following configuration.

RAM: 512MB

Model Name : Intel(R) Core(TM) i5-2520M CPU @ 2.50GHz

Cpu MHz : 2493.706

Cache Size : 3072 KB

Server version: Apache/2.2.22 (Unix)

Let's begin our web server performance test, by looking at some example usage of httperf.

    [root@myvm1 ~]# httperf --hog --server 192.168.159.128
    httperf --hog --client=0/1 --server=192.168.159.128 --port=80 --uri=/ --send-buffer=4096 --recv-buffer=16384 --num-conns=1 --num-calls=1
    Maximum connect burst length: 0
    Total: connections 1 requests 1 replies 1 test-duration 0.006 s
    Connection rate: 168.1 conn/s (6.0 ms/conn, <=1 concurrent connections)
    Connection time [ms]: min 6.0 avg 6.0 max 6.0 median 5.5 stddev 0.0
    Connection time [ms]: connect 0.6
    Connection length [replies/conn]: 1.000
    Request rate: 168.1 req/s (6.0 ms/req)
    Request size [B]: 68.0
    Reply rate [replies/s]: min 0.0 avg 0.0 max 0.0 stddev 0.0 (0 samples)
    Reply time [ms]: response 5.3 transfer 0.1
    Reply size [B]: header 197.0 content 8105.0 footer 2.0 (total 8304.0)
    Reply status: 1xx=0 2xx=1 3xx=0 4xx=0 5xx=0
    CPU time [s]: user 0.00 system 0.00 (user 0.0% system 16.8% total 16.8%)
    Net I/O: 1373.7 KB/s (11.3*10^6 bps)
    Errors: total 0 client-timo 0 socket-timo 0 connrefused 0 connreset 0
    Errors: fd-unavail 0 addrunavail 0 ftab-full 0 other 0

Let's see what each section of the output is saying. This will help us understand the output of our further tests.

Total: This section shows the number of TCP connections that were initiated, the number of requests sent, the number of replies received, and the total test duration. In our case, httperf initiated only one connection.

Connection rate: This shows the rate at which new connections were initiated, in connections per second, along with the maximum number of connections that were open concurrently.

Connection time: This shows the lifetime of a TCP connection, that is, the time between connection initiation and connection closure, as minimum, average, maximum, median and standard deviation.

Connection time (connect): This second connection time line shows the average time it took to establish a TCP connection.

Connection length: This shows the average number of replies received per connection.

Request rate: This shows the rate at which httperf issued HTTP requests.

Request Size: This shows the request size in bytes.

Reply rate: This shows the rate at which the server replied to the requests. httperf samples this rate once every five seconds, which is why a very short run like the one above reports 0 samples.

Reply time: This shows the time the web server took to respond to the request (response) and the time it took to receive the reply (transfer).

Reply size: Similar to the request size, this shows the reply size in bytes, split into header, content and footer.

Reply status: This shows how many replies fell into each HTTP status code class (1xx to 5xx).

The remaining two sections show the CPU time and network throughput used by the client while carrying out the test, and the errors encountered during the test. Please note that most of the output sections above are useful only when issuing many requests simultaneously, which is what we will do now.
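If you run many tests, reading these sections by eye gets tedious. The following is a minimal Python sketch, not part of httperf itself, that pulls a few headline numbers out of the summary text. The function name `parse_httperf` and the choice of fields are assumptions made for illustration; the sample text is copied from the single-connection run above.

```python
import re

# Sample lines copied from the single-connection run shown earlier.
SAMPLE = """\
Total: connections 1 requests 1 replies 1 test-duration 0.006 s
Connection rate: 168.1 conn/s (6.0 ms/conn, <=1 concurrent connections)
Reply status: 1xx=0 2xx=1 3xx=0 4xx=0 5xx=0
Errors: total 0 client-timo 0 socket-timo 0 connrefused 0 connreset 0
"""


def parse_httperf(text):
    """Extract a few headline numbers from httperf's summary text.

    Hypothetical helper: it only understands the lines matched below.
    """
    result = {}

    total = re.search(
        r"Total: connections (\d+) requests (\d+) replies (\d+) "
        r"test-duration ([\d.]+) s",
        text,
    )
    if total:
        result["connections"] = int(total.group(1))
        result["requests"] = int(total.group(2))
        result["replies"] = int(total.group(3))
        result["duration_s"] = float(total.group(4))

    rate = re.search(r"Connection rate: ([\d.]+) conn/s", text)
    if rate:
        result["conn_rate"] = float(rate.group(1))

    # Reply status counters per class, e.g. {"2xx": 1, ...}
    status = re.search(r"Reply status: (.*)", text)
    if status:
        pairs = re.findall(r"(\dxx)=(\d+)", status.group(1))
        result["status"] = {cls: int(count) for cls, count in pairs}

    errors = re.search(r"Errors: total (\d+)", text)
    if errors:
        result["errors_total"] = int(errors.group(1))

    return result


summary = parse_httperf(SAMPLE)
print(summary["replies"], summary["status"]["2xx"], summary["errors_total"])
```

In practice you would feed the function the captured output of a real httperf run instead of `SAMPLE`.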

Note: You will get better test results when httperf is run against the server from multiple clients, so that the server is pushed to its full capacity.

Let's move on to some heavier tests, like the following.

    [root@myvm1 html]# httperf --server 192.168.159.128 --port 80 --uri /index.php --rate 600 --num-conn 20000 --num-call 1 --timeout 5
    httperf --timeout=5 --client=0/1 --server=192.168.159.128 --port=80 --uri=/index.php --rate=600 --send-buffer=4096 --recv-buffer=16384 --num-conns=20000 --num-calls=1
    Maximum connect burst length: 283
    Total: connections 16562 requests 15447 replies 13300 test-duration 38.218 s
    Connection rate: 433.4 conn/s (2.3 ms/conn, <=1022 concurrent connections)
    Connection time [ms]: min 1.3 avg 1015.3 max 8140.0 median 388.5 stddev 1394.7
    Connection time [ms]: connect 501.1
    Connection length [replies/conn]: 1.000
    Request rate: 404.2 req/s (2.5 ms/req)
    Request size [B]: 77.0
    Reply rate [replies/s]: min 179.8 avg 371.9 max 510.5 stddev 110.2 (7 samples)
    Reply time [ms]: response 524.9 transfer 22.6
    Reply size [B]: header 197.0 content 8105.0 footer 2.0 (total 8304.0)
    Reply status: 1xx=0 2xx=13300 3xx=0 4xx=0 5xx=0
    CPU time [s]: user 0.77 system 15.92 (user 2.0% system 41.7% total 43.7%)
    Net I/O: 2851.8 KB/s (23.4*10^6 bps)
    Errors: total 6700 client-timo 3262 socket-timo 0 connrefused 0 connreset 0
    Errors: fd-unavail 3438 addrunavail 0 ftab-full 0 other 0

In the above test we used some additional arguments.

--uri (uniform resource identifier) specifies the file to request from the server with GET. In the above test we fetch the index.php file repeatedly.

--server specifies the address of the server to fetch the HTTP data from.

--port specifies the port of the web server.

--rate specifies the number of connections created per second. With one call per connection, that is 600 requests per second in our case.

--num-conns (abbreviated to --num-conn in the command above) specifies the total number of TCP connections to make. In the above test we make 20 thousand connections at a rate of 600 per second.

--num-calls (abbreviated to --num-call in the command above) specifies the number of HTTP calls made on each connection. A call is one HTTP request and its reply.

--timeout specifies the number of seconds the client waits for a reply.
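To make the relationship between these options concrete, here is a small Python sketch that assembles the same command line and estimates how long the test should take if the client keeps up with the requested rate. The helper names `httperf_args` and `ideal_duration_s` are invented for this illustration; only the httperf options themselves come from the command above.

```python
import shlex


def httperf_args(server, uri, rate, num_conns, num_calls=1, timeout=5, port=80):
    """Build the httperf argument list for a fixed-rate test (hypothetical helper)."""
    return [
        "httperf",
        "--server", server,
        "--port", str(port),
        "--uri", uri,
        "--rate", str(rate),
        "--num-conns", str(num_conns),
        "--num-calls", str(num_calls),
        "--timeout", str(timeout),
    ]


def ideal_duration_s(rate, num_conns):
    """Seconds needed to open num_conns connections at `rate` per second."""
    return num_conns / rate


args = httperf_args("192.168.159.128", "/index.php", rate=600, num_conns=20000)
print(shlex.join(args))                          # the command line to run
print(round(ideal_duration_s(600, 20000), 1))    # about 33.3 seconds
```

The run above reported a test duration of 38.218 s, somewhat longer than this ideal figure, which is what you would expect once connections start waiting for the 5 second timeout. The list returned by `httperf_args` could be passed to `subprocess.run` on a machine where httperf is installed.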

You can increase the request load, ideally from several clients, to analyse the web server's performance once the server's resources are saturated.

    [root@myvm1 ~]# httperf --server 192.168.159.128 --port 80 --uri /index.php --rate 800 --num-conn 30000 --num-call 1 --timeout 5
    httperf --timeout=5 --client=0/1 --server=192.168.159.128 --port=80 --uri=/index.php --rate=800 --send-buffer=4096 --recv-buffer=16384 --num-conns=30000 --num-calls=1
    Maximum connect burst length: 1536
    Total: connections 16159 requests 15102 replies 11219 test-duration 45.462 s
    Connection rate: 355.4 conn/s (2.8 ms/conn, <=1022 concurrent connections)
    Connection time [ms]: min 96.3 avg 994.8 max 8186.8 median 324.5 stddev 1418.9
    Connection time [ms]: connect 541.0
    Connection length [replies/conn]: 1.000
    Request rate: 332.2 req/s (3.0 ms/req)
    Request size [B]: 77.0
    Reply rate [replies/s]: min 8.6 avg 248.2 max 496.5 stddev 161.9 (9 samples)
    Reply time [ms]: response 567.6 transfer 20.0
    Reply size [B]: header 197.0 content 8105.0 footer 2.0 (total 8304.0)
    Reply status: 1xx=0 2xx=11219 3xx=0 4xx=0 5xx=0
    CPU time [s]: user 1.02 system 16.14 (user 2.2% system 35.5% total 37.7%)
    Net I/O: 2025.7 KB/s (16.6*10^6 bps)
    Errors: total 18781 client-timo 4940 socket-timo 0 connrefused 0 connreset 0
    Errors: fd-unavail 13841 addrunavail 0 ftab-full 0 other 0

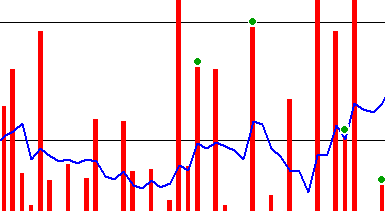

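As you can see, as we increase the connection rate and the number of TCP connections, the average reply rate drops (from 371.9 to 248.2 replies/s) while the error counts go up (client timeouts from 3262 to 4940, fd-unavail from 3438 to 13841). Keep in mind that fd-unavail counts the times the httperf client itself ran out of file descriptors, so part of these errors reflects a limit on the client rather than on the server.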
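The two runs are easier to compare when each outcome is expressed as a share of the connections that were planned. The sketch below does that arithmetic in Python; the figures are copied from the two outputs above, while the dictionary layout and the `shares` helper are just an illustrative choice.

```python
# Figures copied from the two httperf runs above.
runs = [
    {"rate": 600, "planned": 20000, "replies": 13300,
     "client_timo": 3262, "fd_unavail": 3438},
    {"rate": 800, "planned": 30000, "replies": 11219,
     "client_timo": 4940, "fd_unavail": 13841},
]


def shares(run):
    """Return each outcome as a percentage of the planned connections."""
    planned = run["planned"]
    return {
        "ok_pct": round(100 * run["replies"] / planned, 1),
        "timeout_pct": round(100 * run["client_timo"] / planned, 1),
        "fd_unavail_pct": round(100 * run["fd_unavail"] / planned, 1),
    }


for run in runs:
    print(run["rate"], shares(run))
```

In both runs the replies, client timeouts and fd-unavail errors add up exactly to the planned number of connections. The share of successful replies falls from 66.5% to 37.4%, the timeout share stays close to 16%, and most of the additional failures show up in the fd-unavail column.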

During the test, you can also watch the number of httpd processes spawned at any given time.
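One way to take such a reading on the server is to count the matching entries in the process list. The following Python sketch assumes that the Apache worker processes are named `httpd` (on some distributions the name is different, for example `apache2`) and that a POSIX-style `ps` is available; both function names are made up for this example.

```python
import subprocess


def count_named(ps_output, name):
    """Count lines of `ps -e -o comm=` output equal to the given command name."""
    return sum(1 for line in ps_output.splitlines() if line.strip() == name)


def sample_process_count(name="httpd"):
    """Run ps once and count matching processes (assumes a POSIX-style ps)."""
    out = subprocess.run(
        ["ps", "-e", "-o", "comm="],
        capture_output=True, text=True, check=True,
    ).stdout
    return count_named(out, name)


# Offline demonstration with made-up ps output, so no server is needed.
fake_ps = "init\nhttpd\nhttpd\nsshd\nhttpd\n"
print(count_named(fake_ps, "httpd"))
```

Calling `sample_process_count()` in a loop with a short sleep while httperf is running gives a rough picture of how the number of server processes grows under load.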

Sarath Pillai


Satish Tiwary
